Cyber Security News Aggregator

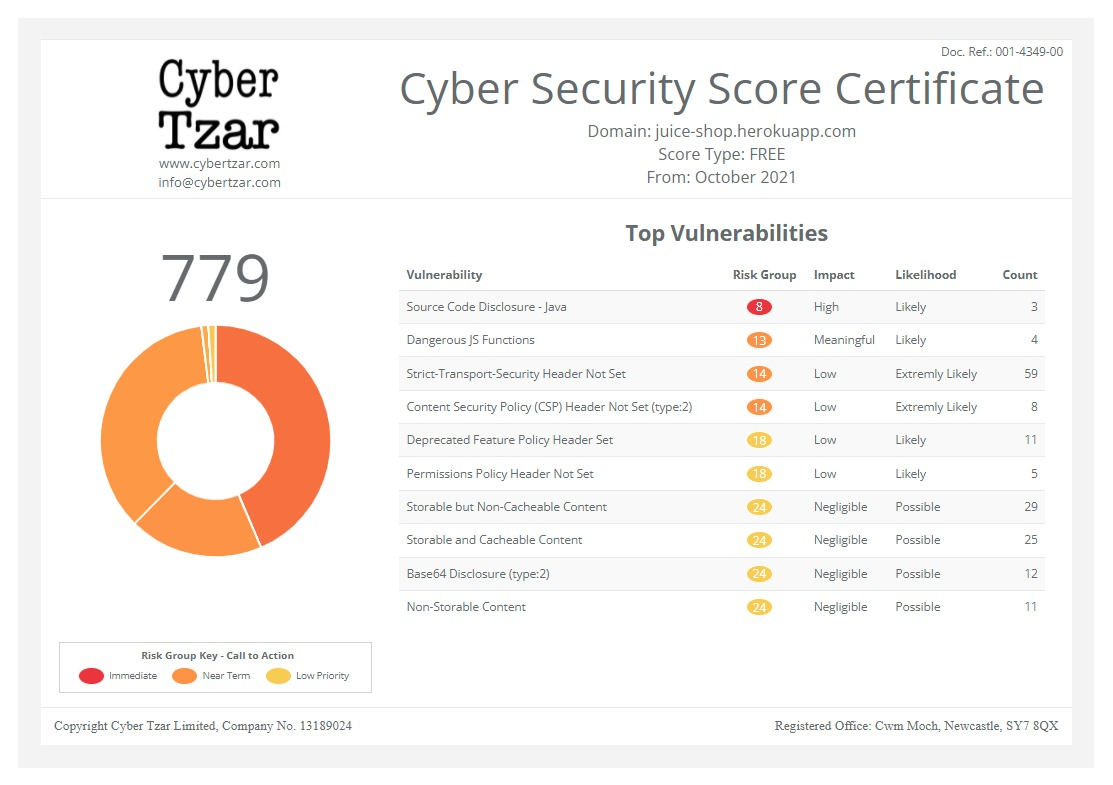

.Cyber Tzar

provide acyber security risk management

platform; including automated penetration tests and risk assesments culminating in a "cyber risk score" out of 1,000, just like a credit score.Manipulating Machine-Learning Systems through the Order of the Training Data

Published on 2022-05-25 15:30:25 UTC by Bruce Schneier

Yet another adversarial ML attack:

Most deep neural networks are trained by stochastic gradient descent. Now “stochastic” is a fancy Greek word for “random”; it means that the training data are fed into the model in random order.

So what happens if the bad guys can cause the order to be not random? You guessed it: all bets are off. Suppose for example a company or a country wanted to have a credit-scoring system that’s secretly sexist, but still be able to pretend that its training was actually fair. Well, they could assemble a set of financial data that was representative of the whole population, but start the model’s training on ten rich men and ten poor women drawn from that set, then let initialisation bias do the rest of the work.

Does this generalise? Indeed it does. Previously, people had assumed that in order to poison a model or introduce backdoors, you needed to add adversarial samples to the training data. Our latest paper shows that’s not necessary at all. If an adversary can manipulate the order in which batches of training data are presented to the model, they can undermine both its integrity (by poisoning it) and its availability (by causing training to be less effective, or take longer). This is quite general across models that use stochastic gradient descent.
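Here is a toy sketch of why the order matters. It assumes a one-feature linear model trained by plain SGD, not a deep network, and the data, learning rate and seeds are all made up for illustration. The same twenty samples are visited once in a random order and once in a chosen order, and the two runs end with different parameters. This only shows that plain SGD is order-dependent. It does not reproduce the lasting bias the quoted passage describes for real models.

```python
import random


def one_pass_sgd(data, order, lr=0.1):
    """One pass of SGD on y ~ w*x + b, visiting samples in exactly the given order."""
    w, b = 0.0, 0.0  # same initialisation for every run
    for i in order:
        x, y = data[i]
        err = (w * x + b) - y  # prediction error on this one sample
        # Gradient step on the squared error 0.5 * err**2
        w -= lr * err * x
        b -= lr * err
    return w, b


# Hypothetical toy data: noisy samples of y = 3x, identical for every run.
noise = random.Random(1)
data = [(k / 10, 3 * k / 10 + noise.gauss(0, 0.5)) for k in range(20)]

# A fair sampler: a random permutation of the indices.
fair_order = list(range(len(data)))
random.Random(42).shuffle(fair_order)

# An illustrative chosen ordering: the same indices, largest targets first.
chosen_order = sorted(range(len(data)), key=lambda i: data[i][1], reverse=True)

print(one_pass_sgd(data, fair_order))
print(one_pass_sgd(data, chosen_order))  # same data, different parameters
```

Nothing in `data` differs between the two runs. Only the sequence passed to `one_pass_sgd` changes, so anyone who controls the sampler has some control over the trained model.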

Research paper.
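The quoted passage does not spell out how an attacker would choose the batch order, and the sketch below is not the paper's method. It assumes a hypothetical attacker who scores each batch with a surrogate model and sorts the batches by that loss. It illustrates the central claim of the quote: no adversarial samples are added, removed or edited, and only the sequence of batches changes. Whether a particular ordering actually harms integrity or availability is the empirical question the paper examines. This code only shows the mechanics.

```python
from typing import Callable, List, Sequence, Tuple

Sample = Tuple[float, float]  # (feature x, target y)
Batch = List[Sample]


def make_batches(data: Sequence[Sample], batch_size: int) -> List[Batch]:
    """Split the data into consecutive batches (the last one may be shorter)."""
    return [list(data[i:i + batch_size]) for i in range(0, len(data), batch_size)]


def mse_under(w: float, b: float) -> Callable[[Batch], float]:
    """Hypothetical surrogate: mean squared error of a fixed model y = w*x + b on a batch."""
    def loss(batch: Batch) -> float:
        return sum((w * x + b - y) ** 2 for x, y in batch) / len(batch)
    return loss


def reorder_batches(batches: List[Batch], surrogate_loss: Callable[[Batch], float],
                    descending: bool = True) -> List[Batch]:
    """Return the same batches sorted by surrogate loss.

    No sample is added, removed or modified: the result is a permutation
    of the input, so the training set itself is unchanged.
    """
    return sorted(batches, key=surrogate_loss, reverse=descending)


data = [(k / 10, 3 * k / 10) for k in range(20)]
batches = make_batches(data, batch_size=4)
attack_order = reorder_batches(batches, mse_under(0.0, 0.0))
print([round(mse_under(0.0, 0.0)(bt), 3) for bt in attack_order])  # largest loss first
```

Sorting is only one possible policy. An attacker could just as easily interleave high-loss and low-loss batches, and the surrogate model here is a stand-in for whatever model the attacker can actually query.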

https://www.schneier.com/blog/archives/2022/05/manipulating-machine-learning-systems-through-the-order-of-the-training-data.html

Published: 2022 05 25 15:30:25

Received: 2022 05 25 15:46:42

Feed: Schneier on Security

Source: Schneier on Security

Category: Cyber Security

Topic: Cyber Security
