Welcome to our

Cyber Security News Aggregator

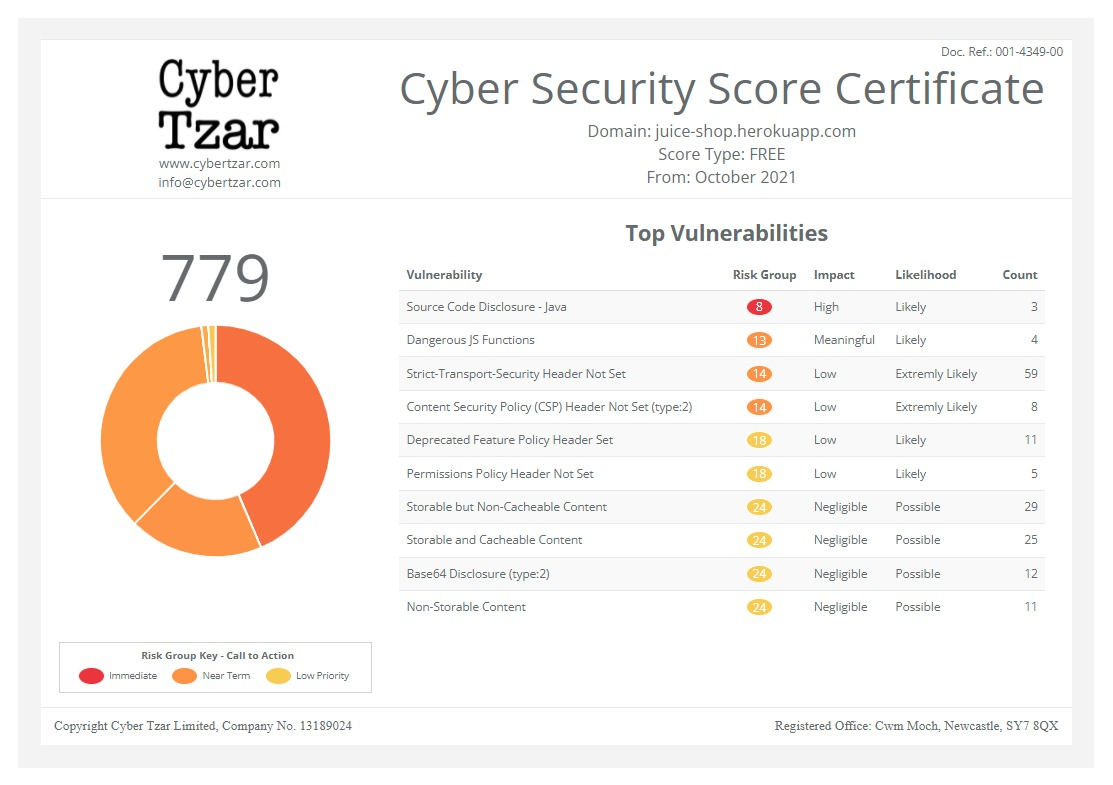

.Cyber Tzar

provide acyber security risk management

platform; including automated penetration tests and risk assesments culminating in a "cyber risk score" out of 1,000, just like a credit score.How to SLSA Part 1 - The Basics

Published on 2022-04-12 16:00:00 UTC by Unknown

Though it’s tempting to try to establish a single standard for how to use SLSA, it’s not possible: SLSA is not a line dance where everyone does the same moves, at the same time, to the same song. It’s a varied system with different styles, moves, and flourishes. The open source community, organizations, and consumers may all implement SLSA differently, but they can still work with each other.

In this three-part series, we’ll explore how three fictional organizations would apply SLSA to meet their different needs. In doing so, we will answer some of the main questions that newcomers to SLSA have:

Part 1: The basics

- How and when do you verify a package with SLSA?

- How to handle artifacts without provenance?

- Where is the provenance stored?

- Where is the appropriate policy stored and who should verify it?

- What should the policies check?

- How do you establish trust & distribute keys?

- What does a secure, heterogeneous supply chain look like?

The Situation

Our fictional example involves three organizations that want to use SLSA:

- Squirrel: a package manager with a large number of developers and users

- Oppy: an open source operating system with an enterprise distribution

- Acme: a mid-sized enterprise

Squirrel wants to make SLSA as easy for their users as possible, even if that means abstracting some details away. Meanwhile, Oppy doesn’t want to abstract anything away from their users under the philosophy that they should explicitly understand exactly what they’re consuming.

Acme is trying to produce a container image that contains three artifacts:

- The Squirrel package ‘foo’

- The Oppy package ‘baz’

- A custom executable, ‘bar’, written by Acme employees

Basics

In order to SLSA, Squirrel, Oppy, and Acme will all need SLSA-capable build services. Squirrel wants to give their maintainers wide latitude to pick a builder service of their own. To support this, Squirrel will qualify some build services at specific SLSA levels (meaning they can produce artifacts up to that level). To start, Squirrel plans to qualify GitHub Actions using an approach like this, and hopes it can achieve SLSA 4 (pending the result of an independent audit). They’re also willing to qualify other build services as needed. Oppy, on the other hand, doesn’t need to support arbitrary build services. They plan to have everyone use their Autobuilder network, which they hope to qualify at SLSA 4 (they’ll conduct the audit/certification themselves). Finally, Acme plans to use Google Cloud Build, which they’ll self-certify at SLSA 4 (pending the result of a Google-conducted audit).

Squirrel, Oppy, and Acme will follow a similar qualification process for the source control systems they plan to support.
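One way to picture the qualification step is as a lookup table that caps the SLSA level an artifact can claim at the level its build service was qualified for. The sketch below is illustrative only: the builder identifier, the table, and the `effective_level` helper are hypothetical, and the level simply mirrors the outcome Squirrel hopes for.

```python
# Hypothetical table of build services Squirrel has qualified, mapped to the
# highest SLSA level each one may produce. Identifiers and levels are
# assumptions for illustration, not real Squirrel configuration.
QUALIFIED_BUILDERS = {
    "github-actions": 4,  # hoped-for level, pending an independent audit
}


def effective_level(builder_id: str, claimed_level: int) -> int:
    """Cap a claimed SLSA level at what the builder is qualified for.

    Builders that have not been qualified are treated as SLSA 0.
    """
    qualified = QUALIFIED_BUILDERS.get(builder_id, 0)
    return min(claimed_level, qualified)
```

Under these assumptions, an artifact from an unqualified builder is treated as SLSA 0 regardless of what its provenance claims.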

Verification options

Full verification

At some point, one or more of the organizations will need to do full verification of each artifact to determine if it is acceptable for a given use case. This is accomplished by checking if the artifact meets the appropriate policy.

Typically, full verification would take place with SLSA provenance, source attestations, and perhaps other specialized attestations (like vulnerability scan results). While having to coordinate this data for all of its dependencies seems like a lot of work to Acme, they’re prepared to do full verification if Squirrel and Oppy are unable to.
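As a rough illustration of what checking an artifact against a policy could involve, here is a minimal sketch. The `Policy` class and `full_verify` function are hypothetical, the provenance layout loosely follows the in-toto statement and SLSA provenance v0.2 field names, and signature checking of the provenance itself is deliberately left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical policy: which builders and which source are acceptable."""
    allowed_builders: frozenset
    expected_source: str


def full_verify(artifact_sha256: str, provenance: dict, policy: Policy) -> bool:
    """Return True if the provenance covers the artifact and meets the policy.

    Assumes the provenance signature was already verified elsewhere.
    """
    # The provenance must be about this exact artifact.
    subjects = provenance.get("subject", [])
    if not any(s.get("digest", {}).get("sha256") == artifact_sha256 for s in subjects):
        return False

    predicate = provenance.get("predicate", {})
    # The build must have run on a builder the policy accepts.
    if predicate.get("builder", {}).get("id") not in policy.allowed_builders:
        return False

    # The build must have come from the expected source repository.
    source = predicate.get("invocation", {}).get("configSource", {}).get("uri")
    return source == policy.expected_source
```

A real policy would likely also consult source attestations and other specialized attestations, as described above.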

Delegated verification

When Acme isn’t using full verification, they can instead use delegated verification where they check if an artifact is acceptable for a use case by checking if some other trusted party who performed a full verification (such as Squirrel or Oppy) believes the artifact is acceptable.

Delegated verification is easier to perform quickly with limited data and network connectivity. It may also suit users who mainly care whether someone they trust has verified that the artifact is good.

Squirrel likes how easy delegated verification would make things for their users and plans to support it by creating a Verification Summary Attestation (VSA) when they perform full verification.
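The relationship between the two modes can be sketched as follows: the party doing full verification records the outcome in a VSA, and a downstream user only checks that VSA. The field names below are a simplified assumption loosely modelled on the SLSA VSA format, and `make_vsa` and `delegated_verify` are hypothetical helpers; signatures are ignored here.

```python
def make_vsa(artifact_sha256: str, verifier_id: str, passed: bool, level: str) -> dict:
    """Summarize the outcome of a full verification (simplified VSA layout)."""
    return {
        "subject": [{"digest": {"sha256": artifact_sha256}}],
        "predicate": {
            "verifier": {"id": verifier_id},
            "verification_result": "PASSED" if passed else "FAILED",
            "policy_level": level,
        },
    }


def delegated_verify(artifact_sha256: str, vsa: dict, trusted_verifiers: set) -> bool:
    """Accept the artifact if a trusted verifier says it passed."""
    predicate = vsa.get("predicate", {})
    subject_matches = any(
        s.get("digest", {}).get("sha256") == artifact_sha256
        for s in vsa.get("subject", [])
    )
    return (
        subject_matches
        and predicate.get("verifier", {}).get("id") in trusted_verifiers
        and predicate.get("verification_result") == "PASSED"
    )
```

Note how little the downstream check needs: one small document and a short list of verifiers it trusts.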

When to verify

Verification (full or delegated) could happen at a number of different times.

On import to repo

Squirrel plans to perform full verification when an artifact is published to their repo. This will ensure that packages in the repo have met their corresponding policy. It’s also helpful because all the required data can be gathered when latency isn’t critical.

If this were the only time verification is performed, it would put the repository's storage in the trusted computing base (TCB) of its users. Squirrel’s plans to use delegated verification (and issue VSAs) can prevent this. The signature on the VSA will prevent the artifacts from being tampered with while sitting in storage, even if they’re just SLSA 0. Downstream users will just need to verify the VSA.
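To see why a signed VSA takes repository storage out of the TCB, consider the sketch below. It uses an HMAC with a shared key purely as a stand-in for a real signature scheme (a real deployment would more plausibly use asymmetric signatures so that users never hold the signing key). `sign_vsa` and `verify_download` are hypothetical names.

```python
import hashlib
import hmac
import json


def sign_vsa(vsa: dict, key: bytes) -> str:
    """Return a hex MAC over a canonical JSON encoding of the VSA."""
    payload = json.dumps(vsa, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_download(artifact: bytes, vsa: dict, signature: str, key: bytes) -> bool:
    """Check the VSA's MAC, then check that the VSA covers these exact bytes."""
    if not hmac.compare_digest(sign_vsa(vsa, key), signature):
        return False  # the VSA was altered, or was signed with a different key
    digest = hashlib.sha256(artifact).hexdigest()
    return any(
        s.get("digest", {}).get("sha256") == digest
        for s in vsa.get("subject", [])
    )
```

If the stored artifact is modified after the VSA was issued, its digest no longer matches the signed subject and the check fails, whatever SLSA level the artifact itself had.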

Acme also wants to do some sort of verification on the import to their internal repo since it simplifies their security story. They’re not quite sure what this will look like yet.

On install

Acme also wants to do verification when an artifact is actually installed since it can remove a number of intermediaries from their TCB (their repo, the network, upstream storage systems).

If they perform full verification at install then they must gather all the required information. That could be a lot of data, but it might be simplified by gathering the data from external sources and caching it in their internal repo. A larger problem is that it requires Acme to have established trust in all parties that produced that information (e.g. every builder of every package). For a complex supply chain that may be difficult.

If Acme performs delegated verification, they only need the VSA for the packages being installed and to explicitly trust a handful of parties. This allows the complex full verification to be performed once while allowing all users of that package to perform a much simpler operation.

Given these tradeoffs, Acme prefers delegated verification at install time. Squirrel also really likes the idea and plans to build install-time verification directly into the Squirrel tool.

On use

Verification could also take place each time an artifact is actually used. In this model, latency and reliability are very important (a sudden increase in site traffic may necessitate a scaling operation launching many new jobs).

Time of use verification allows the most context with which decisions can be made (“is this job allowed to run this code and is it free from vulns right now?”). It also allows policy changes to affect already built & installed software (which may or may not be desirable).

Acme wants their users to be able to verify on use without too many dependencies, so they plan to provide VSAs that users can use to perform delegated verification when they start the container (perhaps using something like Kyverno).
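A time-of-use check might combine the verification result with the extra context available at that moment. The sketch below is a hypothetical admission function, not how Kyverno or any particular tool works: `Job`, `admit`, and all of the inputs are assumptions. The inputs are plain in-memory sets so that the decision needs no network call, reflecting the latency concern above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    name: str
    image_sha256: str


def admit(job: Job, passed_digests: set, allowed_images: dict, vulnerable_now: set) -> bool:
    """Decide at start time whether this job may run this image.

    passed_digests: digests for which a trusted VSA reports PASSED
    allowed_images: job name -> set of digests that job is allowed to run
    vulnerable_now: digests currently known to contain vulnerabilities
    """
    return (
        job.image_sha256 in passed_digests
        and job.image_sha256 in allowed_images.get(job.name, set())
        and job.image_sha256 not in vulnerable_now
    )
```

Because `vulnerable_now` and `allowed_images` are evaluated at start time, updating them affects software that was built and installed earlier.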

How to handle artifacts without provenance?

Inevitably a build or system may require that an artifact without ‘original’ provenance is used. In these cases it may be desirable for the importer to generate provenance that details where it got this artifact. For example, generated provenance could show that http://example.com/foo.tgz with sha256:abc was imported by ‘auto-importer’.

Such an artifact would likely not be accepted at higher SLSA levels, but the provenance can be used to: 1) prevent tampering with the artifact after it’s been imported and 2) be a data point for future analysis (e.g. should we prioritize asking for foo.tgz to be distributed with native SLSA provenance?).

Acme might be interested in taking this approach at some point, but they don’t need it at the moment.
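For illustration, importer-generated provenance of this kind could be produced by something like the sketch below. `import_provenance` is a hypothetical helper, and the field layout loosely follows the in-toto statement and SLSA provenance v0.2 formats; treat it as an assumption rather than a normative example.

```python
def import_provenance(url: str, sha256: str, importer_id: str) -> dict:
    """Record where an artifact without original provenance was fetched from."""
    return {
        "_type": "https://in-toto.io/Statement/v0.1",
        # The subject is the imported file, named after the last URL segment.
        "subject": [{"name": url.rsplit("/", 1)[-1], "digest": {"sha256": sha256}}],
        "predicateType": "https://slsa.dev/provenance/v0.2",
        "predicate": {
            # The importer stands in for the builder: it did not build the
            # artifact, it only vouches for where the bytes came from.
            "builder": {"id": importer_id},
            "materials": [{"uri": url, "digest": {"sha256": sha256}}],
        },
    }


statement = import_provenance("http://example.com/foo.tgz", "abc", "auto-importer")
```

The recorded digest is what lets later checks notice if the imported file changes.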

Next time

In our next post we’ll cover specific approaches that can be used to answer questions like “where should attestations and policies be stored?” and “how do I trust the attestations that I receive?”

http://security.googleblog.com/2022/04/how-to-slsa-part-1-basics.html

Published: 2022 04 12 16:00:00

Source: Google Online Security Blog
