Cyber Security News Aggregator

.Cyber Tzar

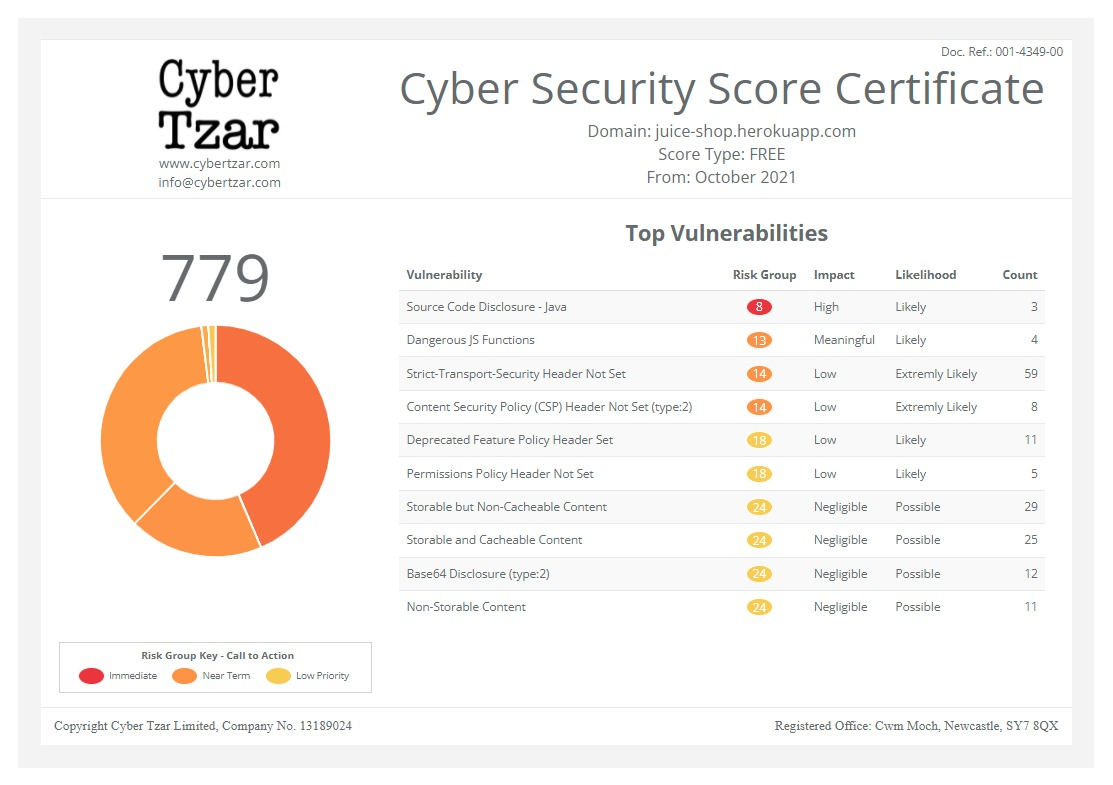

provide acyber security risk management

platform; including automated penetration tests and risk assesments culminating in a "cyber risk score" out of 1,000, just like a credit score.Security Risks of AI

published on 2023-04-27 13:38:09 UTC by Bruce SchneierContent:

Stanford and Georgetown have a new report on the security risks of AI—particularly adversarial machine learning—based on a workshop they held on the topic.

Jim Dempsey, one of the workshop organizers, wrote a blog post on the report:

As a first step, our report recommends the inclusion of AI security concerns within the cybersecurity programs of developers and users. The understanding of how to secure AI systems, we concluded, lags far behind their widespread adoption. Many AI products are deployed without institutions fully understanding the security risks they pose. Organizations building or deploying AI models should incorporate AI concerns into their cybersecurity functions using a risk management framework that addresses security throughout the AI system life cycle. It will be necessary to grapple with the ways in which AI vulnerabilities are different from traditional cybersecurity bugs, but the starting point is to assume that AI security is a subset of cybersecurity and to begin applying vulnerability management practices to AI-based features. (Andy Grotto and I have vigorously argued against siloing AI security in its own governance and policy vertical.)

Our report also recommends more collaboration between cybersecurity practitioners, machine learning engineers, and adversarial machine learning researchers. Assessing AI vulnerabilities requires technical expertise that is distinct from the skill set of cybersecurity practitioners, and organizations should be cautioned against repurposing existing security teams without additional training and resources. We also note that AI security researchers and practitioners should consult with those addressing AI bias. AI fairness researchers have extensively studied how poor data, design choices, and risk decisions can produce biased outcomes. Since AI vulnerabilities may be more analogous to algorithmic bias than they are to traditional software vulnerabilities, it is important to cultivate greater engagement between the two communities.

Another major recommendation calls for establishing some form of information sharing among AI developers and users. Right now, even if vulnerabilities are identified or malicious attacks are observed, this information is rarely transmitted to others, whether peer organizations, other companies in the supply chain, end users, or government or civil society observers. Bureaucratic, policy, and cultural barriers currently inhibit such sharing. This means that a compromise will likely remain mostly unnoticed until long after attackers have successfully exploited vulnerabilities. To avoid this outcome, we recommend that organizations developing AI models monitor for potential attacks on AI systems, create—formally or informally—a trusted forum for incident information sharing on a protected basis, and improve transparency.

https://www.schneier.com/blog/archives/2023/04/security-risks-of-ai.html

Published: 2023 04 27 13:38:09

Received: 2023 04 27 14:03:51

Feed: Schneier on Security

Source: Schneier on Security

Category: Cyber Security

Topic: Cyber Security

Views: 12