Cyber Security News Aggregator

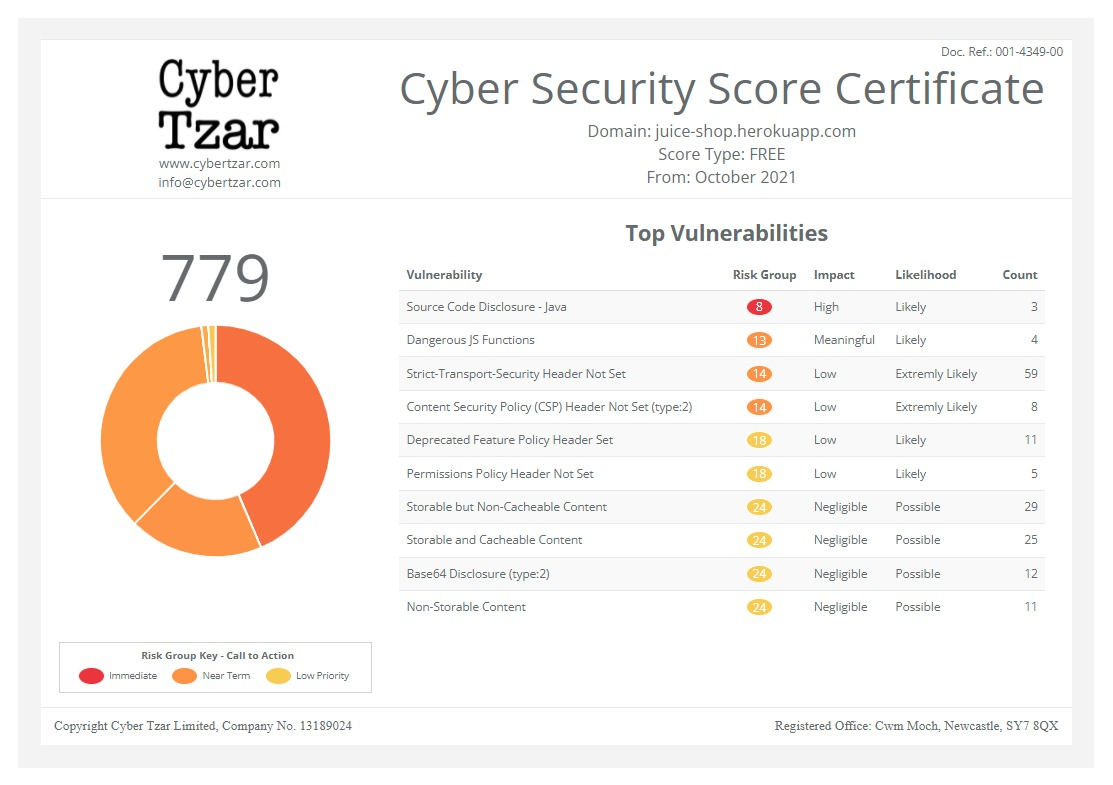

Cyber Tzar provide a cyber security risk management platform, including automated penetration tests and risk assessments culminating in a "cyber risk score" out of 1,000, just like a credit score.

Indirect Instruction Injection in Multi-Modal LLMs

Published on 2023-07-28 11:06:35 UTC by Bruce Schneier

Content:

Interesting research: “(Ab)using Images and Sounds for Indirect Instruction Injection in Multi-Modal LLMs”:

Abstract: We demonstrate how images and sounds can be used for indirect prompt and instruction injection in multi-modal LLMs. An attacker generates an adversarial perturbation corresponding to the prompt and blends it into an image or audio recording. When the user asks the (unmodified, benign) model about the perturbed image or audio, the perturbation steers the model to output the attacker-chosen text and/or make the subsequent dialog follow the attacker’s instruction. We illustrate this attack with several proof-of-concept examples targeting LLaVa and PandaGPT.
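To make the idea of an adversarial perturbation concrete, here is a minimal sketch on a toy model. It is not the authors' code and does not touch LLaVa or PandaGPT. The three-output linear "model", the 60-pixel "image", and the names `logits`, `predict`, and `targeted_perturbation` are all hypothetical. The sketch assumes the attacker can compute gradients of the model's output with respect to the input, and it uses a generic projected signed-gradient loop as a stand-in for whatever optimisation the paper uses.

```python
import math

NUM_CLASSES = 3   # toy "vocabulary" of three possible outputs (assumption)
NUM_PIXELS = 60   # toy flattened grayscale image (assumption)

def logits(image):
    # Hypothetical linear model: output c sums the pixels with index i % 3 == c.
    out = [0.0] * NUM_CLASSES
    for i, px in enumerate(image):
        out[i % NUM_CLASSES] += px
    return out

def softmax(z):
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def predict(image):
    z = logits(image)
    return z.index(max(z))

def sign(v):
    return (v > 0) - (v < 0)

def targeted_perturbation(image, target, eps=0.25, alpha=0.05, steps=10):
    """Find a perturbation with |delta_i| <= eps that pushes the model to `target`."""
    delta = [0.0] * len(image)
    for _ in range(steps):
        perturbed = [min(1.0, max(0.0, px + d)) for px, d in zip(image, delta)]
        probs = softmax(logits(perturbed))
        for i in range(len(image)):
            c = i % NUM_CLASSES
            # Gradient of the cross-entropy loss toward `target` w.r.t. pixel i.
            grad = probs[c] - (1.0 if c == target else 0.0)
            # Signed descent step, then project back into the eps-ball.
            delta[i] = min(eps, max(-eps, delta[i] - alpha * sign(grad)))
    # Blend the perturbation into the image, keeping pixels in [0, 1].
    return [min(1.0, max(0.0, px + d)) for px, d in zip(image, delta)]

# Benign image that the toy model maps to output 0.
benign = [0.8 if i % NUM_CLASSES == 0 else 0.4 for i in range(NUM_PIXELS)]
adversarial = targeted_perturbation(benign, target=2)

print(predict(benign), predict(adversarial))  # prints "0 2"
```

The model itself is never modified. Only the input changes, by at most `eps` per pixel, yet the output flips to the attacker's choice. The attack in the abstract targets full multi-modal LLMs and steers generated text rather than a single class label, so this shows only the underlying principle.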

https://www.schneier.com/blog/archives/2023/07/indirect-instruction-injection-in-multi-modal-llms.html

Published: 2023 07 28 11:06:35

Received: 2023 07 28 11:23:26

Feed: Schneier on Security

Source: Schneier on Security

Category: Cyber Security

Topic: Cyber Security
