Cyber Security News Aggregator

.Cyber Tzar

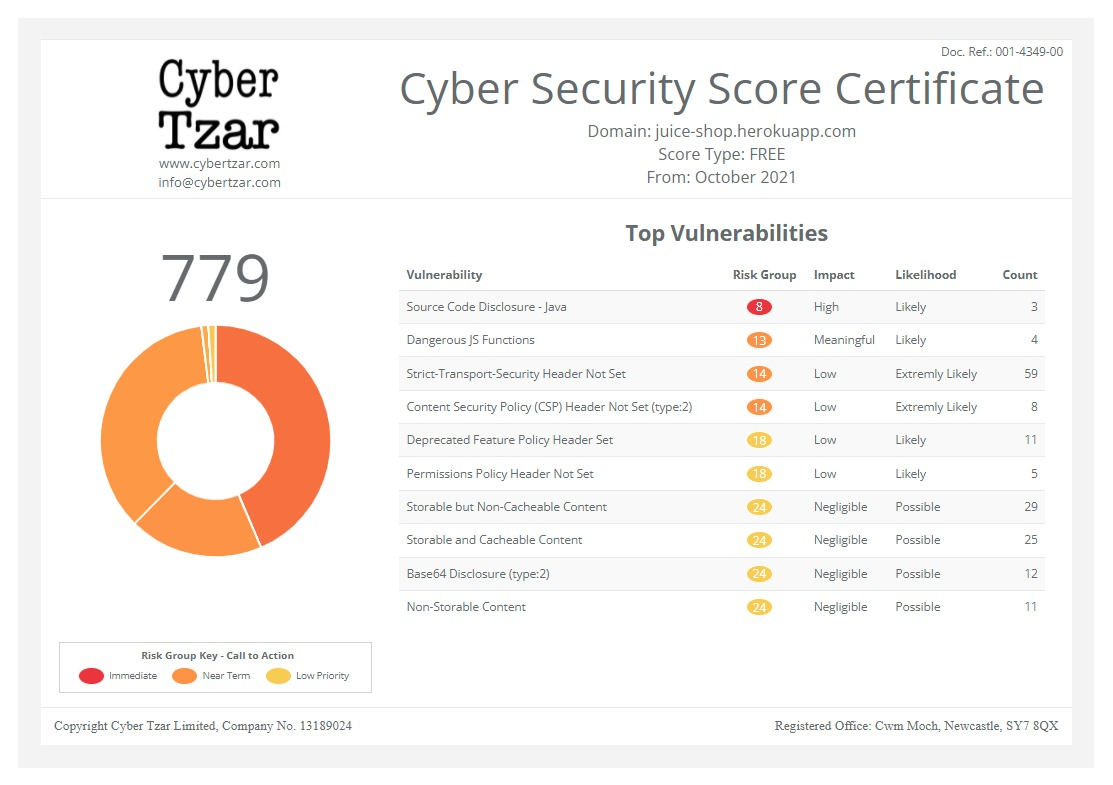

provide acyber security risk management

platform; including automated penetration tests and risk assesments culminating in a "cyber risk score" out of 1,000, just like a credit score.Prime Minister launches new AI Safety Institute

Published on 2023-11-06 10:38:27 UTC by Rebecca Knowles

Content:

A new global hub based in the UK and tasked with testing the safety of emerging types of AI, the AI Safety Institute has been backed by leading AI companies and nations.

After four months of building the first team inside a G7 Government that can evaluate the risks of frontier AI models, it has been confirmed that the Frontier AI Taskforce will evolve to become the AI Safety Institute, with Ian Hogarth continuing as its Chair.

The External Advisory Board for the Taskforce, made up of industry heavyweights from national security to computer science, will now advise the new global hub.

AI Safety Institute to test new AI models before release

The Institute will carefully test new types of frontier AI before and after they are released to address the potentially harmful capabilities of AI models. This includes exploring all the risks, from social harms like bias and misinformation to the most unlikely but extreme risks, such as humanity losing control of AI completely.

In undertaking this research, the AI Safety Institute will look to work closely with the Alan Turing Institute, as the national institute for data science and AI.

The Government has said that in launching the AI Safety Institute, “the UK is continuing to cement its position as a world leader in AI safety, working to develop the most advanced AI protections of any country in the world and giving the British people peace of mind that the countless benefits of AI can be safely captured for future generations to come.”

World leaders and major AI companies expressed their support for the Institute as the world’s first AI Safety Summit concluded on 2 November. From Japan and Canada to OpenAI and DeepMind, the collective backing of key players will strengthen international collaboration on the safe development of frontier AI – putting the UK in prime position to become the home of AI safety and lead the world in seizing its enormous benefits.

Leading researchers at the Alan Turing Institute and Imperial College London have also welcomed the Institute’s launch, alongside representatives of the tech sector in TechUK and the Startup Coalition.

UK agrees two partnerships

Already, the UK has agreed two partnerships to collaborate on AI safety testing: one with the US AI Safety Institute and one with the Government of Singapore, two of the world’s biggest AI powers.

Deepening the UK’s stake and influence in this transformative technology, the Institute will also advance the world’s knowledge of AI safety, with the Prime Minister committing to invest in the technology’s safe development for the rest of the decade as part of the Government’s record investment into R&D.

Prime Minister Rishi Sunak said: “Our AI Safety Institute will act as a global hub on AI safety, leading on vital research into the capabilities and risks of this fast-moving technology.

“It is fantastic to see such support from global partners and the AI companies themselves to work together so we can ensure AI develops safely for the benefit of all our people. This is the right approach for the long-term interests of the UK.”

Secretary of State for Science, Innovation, and Technology, Michelle Donelan said: “The AI Safety Institute will be an international standard bearer. With the backing of leading AI nations, it will help policymakers across the globe in gripping the risks posed by the most advanced AI capabilities, so that we can maximise the enormous benefits.

“We have spoken at length about the Summit at Bletchley Park being a starting point, and as we reach the final day of discussions, I am enormously encouraged by the progress we have made and the lasting processes we have set in motion.”

The launch of the AI Safety Institute marked the UK’s contribution to the collaboration on AI safety testing agreed by world leaders and the companies developing frontier AI at a session in Bletchley Park.

New details revealed at AI Safety Summit

New details revealed at the AI Safety Summit on 2 November set out the body’s mission to prevent surprise to the UK and humanity from rapid and unexpected advances in AI. Ahead of new powerful models expected to be released next year whose capabilities may not be fully understood, its first task will be to quickly put in place the processes and systems to test them before they launch – including open-source models.

From its research informing UK and international policymaking, to providing technical tools for governance and regulation – such as the ability to analyse data being used to train these systems for bias – it will see the government take action to make sure AI developers are not marking their own homework when it comes to safety.

AI Safety Institute Chair Ian Hogarth said: “The support of international governments and companies is an important validation of the work we’ll be carrying out to advance AI safety and ensure its responsible development.

“Through the AI Safety Institute, we will play an important role in rallying the global community to address the challenges of this fast-moving technology.”

Researchers in place

Researchers are already in place to head up the work of the Institute, and they will be provided with access to the compute needed to support their work. This includes making use of the new AI Research Resource, an expanding £300 million network that will include some of Europe’s largest supercomputers, increasing the UK’s AI supercomputing capacity by a factor of thirty.

It follows the UK Government’s announcement of additional investment in Bristol’s “Isambard-AI” and a new computer called “Dawn” in Cambridge, which researchers will be able to access at the same time to boost their research and make AI safe. The AI Safety Institute will have priority access to this “cutting-edge supercomputer” to help develop its programme of research into the safety of frontier AI models and to support government with this analysis.

It comes as government representatives were joined by CEOs of leading AI companies and a number of civil society leaders to discuss the year ahead and consider what immediate steps are needed – by countries, companies, and other stakeholders – to ensure the safety of frontier AI.

As the final day of talks came to a close at Bletchley Park, the AI Safety Summit had already laid the foundations for talks on frontier AI safety to be an enduring discussion, with South Korea set to host next year.

https://securityjournaluk.com/prime-minister-launches-new-ai-safety-institute/

Published: 2023 11 06 10:38:27

Received: 2023 11 06 17:47:46

Feed: Security Journal UK

Source: Security Journal UK

Category: Security

Topic: Security
