Welcome to our Cyber Security News Aggregator

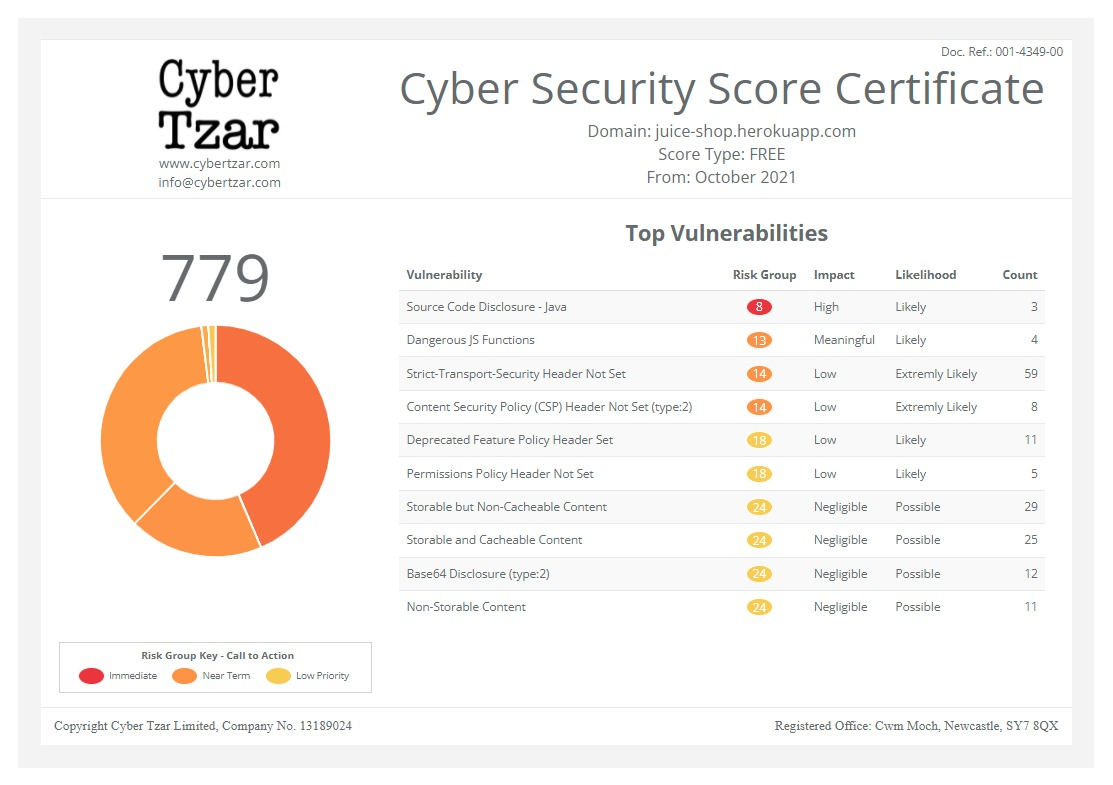

Cyber Tzar provides a cyber security risk management platform, including automated penetration tests and risk assessments culminating in a "cyber risk score" out of 1,000, just like a credit score.

Jailbreaking LLMs with ASCII Art

Published on 2024-03-12 11:12:15 UTC by Bruce Schneier

Researchers have demonstrated that putting words in ASCII art can cause LLMs (GPT-3.5, GPT-4, Gemini, Claude, and Llama2) to ignore their safety instructions.

Research paper.

https://www.schneier.com/blog/archives/2024/03/jailbreaking-llms-with-ascii-art.html

Published: 2024-03-12 11:12:15

Received: 2024-03-12 11:24:41

Feed: Schneier on Security

Source: Schneier on Security

Category: Cyber Security

Topic: Cyber Security
