Cyber Security News Aggregator

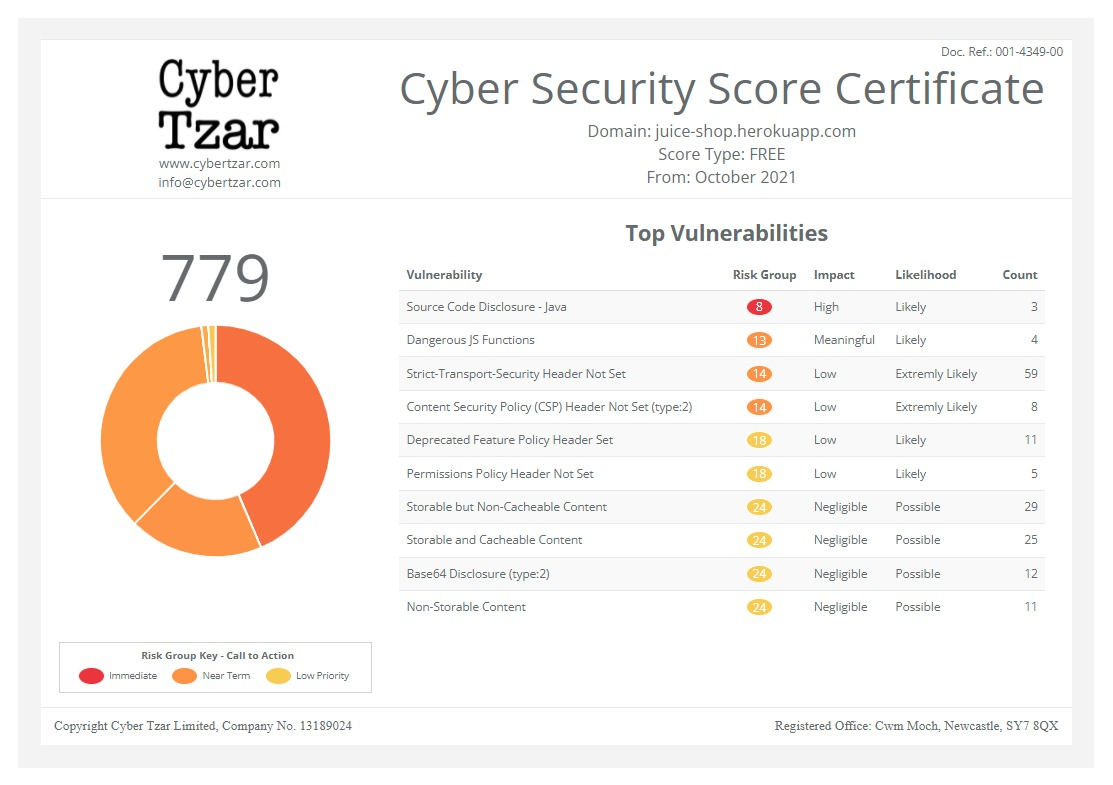

Cyber Tzar provides a cyber security risk management platform, including automated penetration tests and risk assessments culminating in a "cyber risk score" out of 1,000, just like a credit score.

Innovation and Security: The challenges of generative AI for enterprises

Published on 2024-04-09 08:40:26 UTC by James Humphreys

Ravi Pather, VP EME, Ericom Security by Cradlepoint, discusses the benefits of generative AI in business and the associated risks.

Despite what some might think, no, bots probably won’t come looking for your job, but they may well attack your intellectual property.

Generative AI (GenAI) has become a transformative technology for businesses in more ways than one.

In 2024, its widespread adoption will undoubtedly have an impact on organisations across all industries, resulting in increased productivity and efficiency.

However, GenAI can be a double-edged sword. Organisations need to tread carefully when assessing their security risk, especially when it comes to data protection.

Research conducted by Harvard Business School in 2023 showed that implementing Generative AI could increase employee productivity by up to 40%, while introducing new data security challenges.

One main concern: employees who use GenAI to perform work tasks on a daily basis may unintentionally expose sensitive data to the technology’s Large Language Models (LLMs).

Today, alongside the many other security issues they already need to manage, organisations must protect themselves from the potential threats posed by this powerful and prolific tool.

The Benefits of Generative AI in Business

Generative AI stands out for its remarkable ability to generate content, automate software development, improve customer interactions through chatbots and optimise support operations.

According to Gartner, an overwhelming majority of companies have already begun to integrate this technology into their processes, demonstrating its transformative potential.

Gartner predicts that by 2026, more than 80% of companies will use Generative AI APIs (application programming interfaces) and models, or deploy GenAI-enabled applications in their production environments, up from less than 5% in 2023.

The Risks Associated with Generative AI

However, the integration of Generative AI into business practices is not without significant concerns.

The ease with which employees can access and use these tools increases the risk of accidentally exposing confidential information, a concern exacerbated by the ability of these systems to process vast amounts of data.

In addition, training these systems on data available online raises legitimate questions around copyright and intellectual property.

Biases present in the training data can also lead to questionable results, highlighting the need for an ethical and critical approach to deploying Generative AI.

Security Solutions for Generative AI

The rapid increase in the use of these technologies in the enterprise underscores the urgency of developing security solutions that keep up with this growth.

Implementing data loss prevention (DLP) solutions built on zero trust principles offers a promising approach to enabling the secure use of Generative AI.

With solutions such as Cradlepoint’s Generative AI Isolation, organisations can securely enable the use of Generative AI across the enterprise without putting their data or system integrity at risk.

A zero trust architecture that air-gaps the use of GenAI apps in secure, isolated cloud containers provides effective protection. Organisations can easily implement this clientless solution to set access policies for users, rather than blocking GenAI sites outright.

For example, organisations can block users from entering personally identifiable information (PII) or disable the copy/paste function. Either measure reduces the risk of sensitive corporate data flowing into LLMs and being shared outside the organisation.
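The controls described here can be pictured as a simple gate that runs before a prompt reaches a GenAI tool. Those controls are per-user access policies, a paste restriction, and screening prompts for PII. What follows is a minimal sketch under stated assumptions. It is not Cradlepoint's Generative AI Isolation or any product's API. The `GenAIPolicy` class, the `check_submission` function, the site `genai.example.com` and the group names are all hypothetical. The two regex detectors catch email addresses and Luhn-valid card numbers, and they stand in for the far richer rule sets real DLP tools use. The sketch also does not model the isolated cloud containers themselves.

```python
import re
from dataclasses import dataclass


# Hypothetical per-group access policy for one GenAI site.
@dataclass(frozen=True)
class GenAIPolicy:
    site: str                    # GenAI site the policy applies to
    allowed_groups: frozenset    # user groups granted access
    paste_allowed: bool = False  # whether clipboard paste is permitted
    block_pii: bool = True       # whether prompts are screened for PII


def find_policy(user_groups, site, policies):
    """Return the first policy granting this user access to the site, else None."""
    for policy in policies:
        if policy.site == site and set(user_groups) & policy.allowed_groups:
            return policy
    return None  # no explicit grant means deny (default deny)


# Hypothetical PII detectors; real DLP rule sets are far more extensive.
EMAIL_RE = re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")
CARD_RE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")  # 13-19 digits, optional separators


def luhn_valid(digits):
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        n = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            n *= 2
            if n > 9:
                n -= 9
        total += n
    return total % 10 == 0


def find_pii(text):
    """Return the kinds of PII detected in the text (empty list if none)."""
    found = []
    if EMAIL_RE.search(text):
        found.append("email")
    for match in CARD_RE.finditer(text):
        if luhn_valid(re.sub(r"[ -]", "", match.group())):
            found.append("card_number")
            break
    return found


def check_submission(user_groups, site, text, pasted, policies):
    """Decide whether a prompt may be sent to the site; returns (allowed, reason)."""
    policy = find_policy(user_groups, site, policies)
    if policy is None:
        return False, "no policy grants access"
    if pasted and not policy.paste_allowed:
        return False, "paste disabled"
    if policy.block_pii:
        pii = find_pii(text)
        if pii:
            return False, "PII detected: " + ", ".join(pii)
    return True, "ok"


if __name__ == "__main__":
    policies = [GenAIPolicy("genai.example.com", frozenset({"marketing"}))]
    print(check_submission({"marketing"}, "genai.example.com",
                           "Draft a product blurb", False, policies))  # (True, 'ok')
    print(check_submission({"marketing"}, "genai.example.com",
                           "Email alice@example.com", False, policies))  # (False, 'PII detected: email')
```

Access is denied unless a policy explicitly grants it, which mirrors the zero trust idea of granting no implicit trust. Marketing staff can still use the GenAI site, but with paste disabled and prompts screened, rather than the site being blocked for everyone. The card check requires a valid Luhn checksum so that arbitrary long numbers, such as order references, are less likely to be flagged.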

In addition, GenAI isolation also protects users’ devices and corporate networks from any malware generated by a GenAI tool or transmitted from a malicious source.

Generative AI represents a unique opportunity for business innovation, but it also demands close attention to the security risks it can introduce.

By adopting advanced security strategies, organisations can harness the full potential of this technology while ensuring the protection of their most valuable assets.

For Australian organisations, the balance between innovation and security will become the key to successfully navigating the digital future.

https://securityjournaluk.com/the-challenges-generative-ai-for-enterprises/

Published: 2024 04 09 08:40:26

Received: 2024 04 09 08:46:48

Feed: Security Journal UK

Source: Security Journal UK

Category: Security

Topic: Security