Cyber Security News Aggregator

.Cyber Tzar

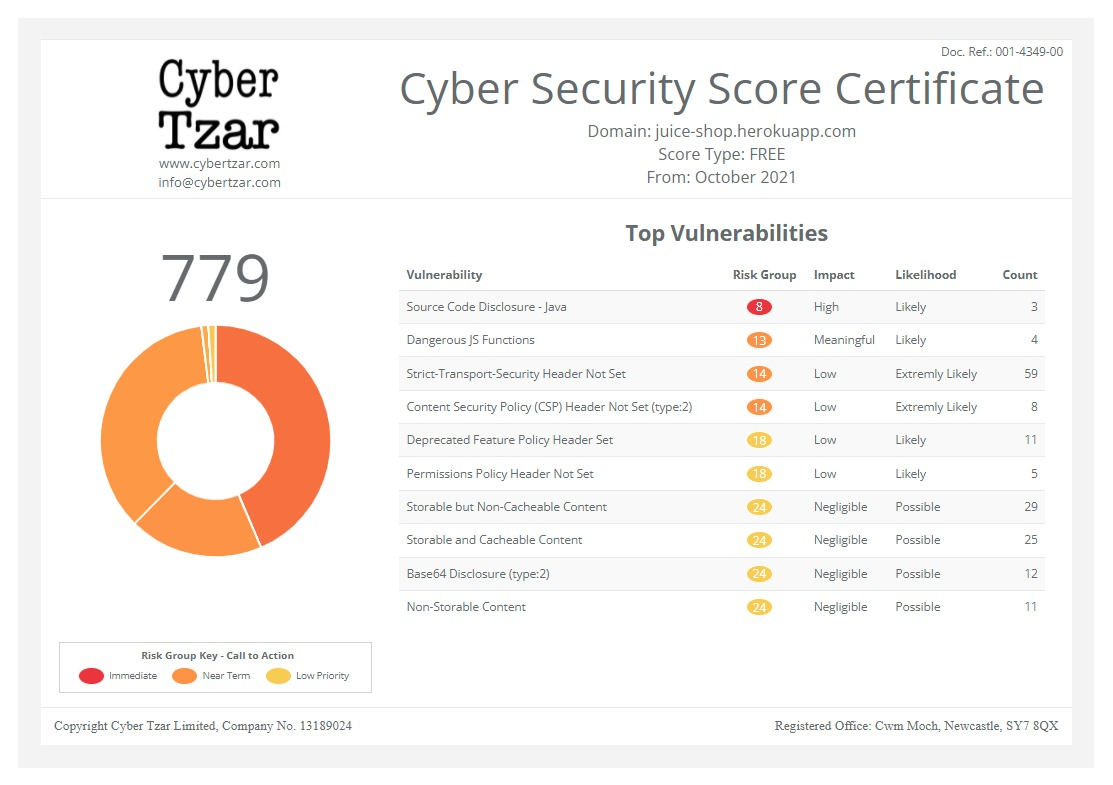

provide acyber security risk management

platform; including automated penetration tests and risk assesments culminating in a "cyber risk score" out of 1,000, just like a credit score.Building trust in generative AI with a zero trust approach

Published on 2024-04-10 07:45:00 UTC by James Humphreys

Tim Freestone, Chief Strategy and Marketing Officer at Kiteworks, speaks about the different approaches used to build trust when it comes to generative AI.

As generative AI continues to evolve and create increasingly sophisticated synthetic content, ensuring trust and integrity becomes vital.

This is where a zero trust security approach comes in: one that combines cybersecurity principles, authentication safeguards and content policies to create responsible and secure generative AI systems that can benefit us all.

But what does zero trust generative AI entail?

Why could it represent the future of AI safety?

How do you set about implementing it?

And what challenges could you face?

What does Zero Trust Generative AI entail?

Zero trust generative AI integrates two key concepts: the zero trust security model and generative AI capabilities.

The zero trust model operates on the principle of maintaining rigorous verification, never assuming trust, but rather confirming every access attempt and transaction.
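As a minimal sketch of what "confirming every access attempt" can look like in code, the example below checks a signature on each individual request instead of trusting a caller because of where it sits on the network. The shared key, the message format and the function names are assumptions made for illustration; they do not come from the article or from any particular product.

```python
import hashlib
import hmac

# Hypothetical shared secret; in practice this would come from a secrets manager.
SECRET_KEY = b"demo-secret-not-for-production"


def sign(message: bytes) -> str:
    """Return a hex HMAC-SHA256 tag for the message."""
    return hmac.new(SECRET_KEY, message, hashlib.sha256).hexdigest()


def verify_request(message: bytes, tag: str) -> bool:
    """Check the tag on every request; nothing is trusted by default."""
    # compare_digest performs a constant-time comparison of the two tags.
    return hmac.compare_digest(sign(message), tag)
```

A request whose tag does not match its content is rejected, however trusted the caller appeared a moment earlier.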

This shift away from implicit trust is crucial in the new remote and cloud-based computing era in which we now live.

Generative AI refers to a class of AI systems that can autonomously create new, original content like text, images, audio, video and more based on their training data.

This ability to synthesise novel, realistic artefacts has grown enormously in recent years in line with algorithmic advances.

Fusing these two concepts together prepares generative AI models for emerging threats and vulnerabilities through proactive security measures woven throughout their processes from data pipelines to user interaction.

It could not be more important, as it provides multifaceted protection against misuse at a time when generative models are acquiring unprecedented creative capacity.

Why it represents the future of AI safety

As generative models rapidly increase in sophistication and realism, so too does their potential for harm if misused or poorly designed, whether intentionally or not.

Vulnerabilities or gaps could enable bad actors to exploit these systems to spread misinformation, produce content designed to mislead, or forge dangerous and unethical material on a wide scale.

Of course, even the most well-intentioned systems may struggle to fully avoid ingesting biases and falsehoods during data collection, or may inadvertently reinforce them.

Moreover, the authenticity and provenance of often strikingly realistic outputs can be challenging to verify without rigorous mechanisms.

This combination underscores the necessity of securing generative models through practices like the zero trust approach.

Implementing its principles provides vital safeguards by thoroughly validating system inputs, monitoring ongoing processes, inspecting outputs and credentialing access through every stage to mitigate risks and prevent potential exploitation routes.

This protects public trust and confidence in AI’s societal influence.

How to set about implementing Zero Trust Generative AI

Constructing a Zero Trust framework for generative AI encompasses several practical actions across architectural design, data management, access controls and more.

Key measures involve:

1. Authentication and Authorisation: verify all user identities unequivocally and restrict access permissions to only those required for each user’s authorised roles. Apply protocols like multi-factor authentication (MFA) universally.

2. Data Source Validation: confirm the integrity of all training data through detailed logging, audit trails, verification frameworks and oversight procedures. Continuously evaluate datasets for emerging issues.

3. Process Monitoring: actively monitor system processes for suspicious activity using rules-based anomaly detection, machine learning models and other quality assurance tools.

4. Output Screening: automatically inspect and flag outputs that violate defined ethics, compliance or policy guardrails, facilitating human-in-the-loop review.

5. Activity Audit: rigorously log and audit all system activity end-to-end to maintain accountability. Support detailed tracing of generated content origins.
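Three of the measures above (authentication and authorisation, output screening and activity audit) can be sketched as a single request gateway. This is an illustrative outline under stated assumptions, not a reference implementation: the role map, the flagged terms, the `Request` and `Gateway` names and the decision labels are all hypothetical, and the model is passed in as a plain function.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-action policy; a real system would load this from a policy store.
ROLE_PERMISSIONS = {
    "analyst": {"generate_text"},
    "admin": {"generate_text", "view_audit_log"},
}

# Hypothetical guardrail: outputs containing these terms are held for human review.
FLAGGED_TERMS = {"password", "passport number"}


@dataclass
class Request:
    user: str
    role: str
    mfa_verified: bool  # assumed to be set by an upstream MFA check
    action: str
    prompt: str


@dataclass
class Gateway:
    audit_log: list = field(default_factory=list)

    def _finish(self, request, decision, output=None):
        # Measure 5: record every decision, whether access was granted or not.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": request.user,
            "action": request.action,
            "decision": decision,
        })
        return decision, output

    def handle(self, request, model):
        # Measure 1: re-check MFA and role permissions on every call.
        if not request.mfa_verified:
            return self._finish(request, "denied_mfa")
        if request.action not in ROLE_PERMISSIONS.get(request.role, set()):
            return self._finish(request, "denied_role")
        output = model(request.prompt)
        # Measure 4: screen the output before it is released.
        if any(term in output.lower() for term in FLAGGED_TERMS):
            return self._finish(request, "held_for_review")
        return self._finish(request, "allowed", output)
```

Calling `Gateway().handle(request, model)` returns a decision label and, only when the request is allowed, the model output. Denied and held requests still leave an audit entry, which is the point of logging end-to-end.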

The importance of content layer security

While access controls provide the first line of defence in Zero Trust Generative AI, comprehensive content layer policies constitute the next crucial layer of protection.

This expands oversight from what users can access to what data an AI system itself can access, process, or disseminate irrespective of credentials.

Key aspects include:

1. Content Policies: define policies restricting access to prohibited types of training data, sensitive personal information or topics posing heightened risks if synthesised or propagated. Continuously refine rulesets.

2. Data Access Controls: implement strict access controls specifying which data categories each AI model component can access based on necessity and risk levels.

3. Compliance Checks: perform ongoing content compliance checks using automated tools plus human-in-the-loop auditing to catch policy and regulatory compliance violations.

4. Data Traceability: maintain clear, granular audit trails for high-fidelity tracing of the origins, transformations and uses of data flowing through generative AI architectures.

This holistic content layer oversight further cements comprehensive protection and accountability throughout generative AI systems.
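The data access controls and data traceability aspects described above can be sketched together. The example below is a rough illustration under assumptions: the component names, data categories and the hash-chained log design are hypothetical choices made for the sketch, not something the article prescribes. Access is denied by default, and every decision is appended to a log in which each entry's hash covers the previous entry, so later tampering can be detected.

```python
import hashlib
import json

# Hypothetical policy: which data categories each model component may read.
COMPONENT_POLICY = {
    "retriever": {"public_docs", "product_manuals"},
    "fine_tuner": {"public_docs"},
}


def can_access(component: str, category: str) -> bool:
    """Default deny: unknown components or categories get no access."""
    return category in COMPONENT_POLICY.get(component, set())


class ProvenanceLog:
    """Append-only log where each entry's hash covers the previous entry's hash."""

    def __init__(self):
        self.entries = []

    def append(self, component, category, allowed):
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        record = {"component": component, "category": category,
                  "allowed": allowed, "prev": prev_hash}
        payload = json.dumps(record, sort_keys=True).encode()
        record["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(record)

    def verify(self) -> bool:
        """Recompute every hash and check that the chain is unbroken."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            payload = json.dumps(body, sort_keys=True).encode()
            recomputed = hashlib.sha256(payload).hexdigest()
            if entry["prev"] != prev_hash or recomputed != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


def request_data(log, component, category):
    """Decide access at the content layer and record the decision."""
    allowed = can_access(component, category)
    log.append(component, category, allowed)
    return allowed
```

Here the decision depends on what the component is asking to read, not on who launched it, which is the shift from user-level to content-level control that this section describes.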

What challenges you could face

While crucial for responsible AI development and building public trust, putting zero trust generative AI into practice faces an array of challenges spanning technical, policy, ethical and operational domains.

On the technical side, rigorously implementing layered security controls across sprawling machine learning pipelines without degrading model performance poses non-trivial complexities for engineers and researchers.

Substantial work is essential to develop effective tools and integrate them smoothly.

Additionally, balancing powerful content security, authentication and monitoring measures while retaining the flexibility for ongoing innovation represents a delicate trade-off requiring care and deliberation when crafting policies or risk models.

Overly stringent approaches may constrain beneficial research directions or creativity.

Further challenges emerge in value-laden considerations surrounding content policies, from charting the bounds of free speech to grappling with biases encoded in training data. Importing existing legal or social norms into automated rulesets also proves complex.

These issues necessitate actively consulting diverse perspectives and revisiting decisions as technology and attitudes coevolve.

Surmounting these multifaceted hurdles requires sustained, coordinated efforts across various disciplines.

The road ahead for trustworthy AI

As generative AI advances rapidly and becomes ubiquitous throughout society, zero trust principles embedded deeply in generative architectures offer a proactive path to accountability, safety and control over these fast-accelerating technologies.

Constructive policy guidelines, appropriate funding and governance supporting research in this direction can catalyse progress towards ethical, secure and reliable generative AI worthy of public confidence.

With diligence and cooperation across private institutions and government bodies, this comprehensive security paradigm paves the way for realising generative AI’s immense creative potential responsibly for the benefit of all.

In an era where machine-generated media holds increasing influence over how we communicate, consume information and even perceive reality, ensuring the accountability of emerging generative models becomes paramount.

Holistically integrating zero trust security, spanning authentication, authorisation, data validation, process oversight and output controls, can pre-emptively safeguard these systems against misuse and unintended harm, uphold ethical norms and build essential public trust in AI.

Achieving this will require sustained effort and collaboration across technology pioneers, lawmakers and civil society.

The payoff, however, will be AI progress unimpeded by lapses in security or safety, meaning it can flourish in step with human values.

With a Private Content Network, organisations can protect their sensitive content from AI leaks.

The best of these provide content-defined zero trust controls, featuring least-privilege access defined at the content layer and next-gen DRM capabilities that block downloads to prevent AI ingestion.

They also employ AI to detect anomalous activity – for example, sudden spikes in access, edits, sends and shares of sensitive content.

Unifying governance, compliance and security of sensitive content communications on the Private Content Network makes detecting this anomalous activity across sensitive content communication channels easier and faster.
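To make the idea of spotting sudden spikes in access concrete, here is a minimal sketch that uses a simple statistical rule in place of the AI-based detection mentioned above. It flags any day whose count sits far above the trailing average. The window size, the threshold, the floor on the standard deviation and the function name are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def find_spikes(counts, window=7, threshold=3.0):
    """Return indices whose count exceeds the trailing mean by more than
    `threshold` standard deviations of the trailing window."""
    spikes = []
    for i in range(window, len(counts)):
        history = counts[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        # Floor sigma at 1.0 so a flat history does not cause division by zero.
        if (counts[i] - mu) / max(sigma, 1.0) > threshold:
            spikes.append(i)
    return spikes


# Hypothetical daily counts of downloads of sensitive files.
daily_downloads = [10, 12, 11, 9, 10, 11, 10, 12, 95, 11]
print(find_spikes(daily_downloads))  # prints [8]
```

A production system would likely track edits, sends and shares as separate signals and would exclude confirmed spikes from the baseline, which this sketch does not do.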

https://securityjournaluk.com/building-trust-in-generative-ai-zero-trust/

Source: Security Journal UK
